Artificial Intelligence (AI) is revolutionizing the legal profession as we know it. AI-powered legal research software can be a great help to lawyers, paralegals, and other legal professionals. It can increase productivity, reduce costs, and improve accuracy. However, as with any technology, there are potential pitfalls and drawbacks.

In this blog, we examine a case in which a lawyer representing a man who sued an airline relied too heavily on AI to prepare a court filing, and the consequences that followed.

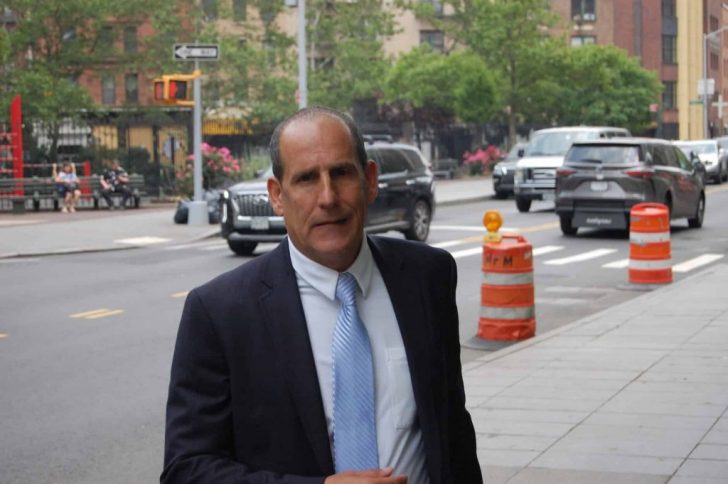

Attorney in Hot Water for Using ChatGPT

New York-based attorney Steven A. Schwartz found himself in hot water when he submitted a 10-page brief that cited more than half a dozen court decisions, including the following:

- Martinez v. Delta Air Lines

- Zicherman v. Korean Air Lines

- Varghese v. China Southern Airlines

However, the quotations cited in the brief were erroneous, and none of the judges or lawyers involved could find the court decisions it referred to.

Schwartz admitted to relying on a program called ChatGPT to do his legal research. This AI-powered tool uses machine learning to generate text in response to natural-language input from users. However, it appears that the program fabricated decisions that did not exist or cited cases that were not relevant to the plaintiff’s claim.

AI-Driven Research Is NOT Infallible

This incident shows that while AI-driven legal research can be useful, it is not infallible. Court decisions, especially in complex cases, involve many nuances and details that a machine may fail to capture. Thus, relying solely on AI can lead to disastrous consequences.

Moreover, lawyers have a responsibility to ensure that all the information they present in court is accurate and properly sourced. An AI-generated brief may be efficient, saving lawyers time and effort, but if it contains false content, it can undermine the lawyer’s credibility and harm their client’s case. Judges expect lawyers to do their due diligence in legal research, and the fact that an AI tool was used to create a brief is no excuse for an erroneous submission.

Another issue with using AI for legal research is the potential for bias. AI programs are only as impartial as their input data. If the program has been fed biased or incomplete data, it may generate results that reflect those biases or inaccuracies.

Parting Thoughts

Artificial Intelligence can be highly beneficial for legal research. It can sort through vast amounts of information in a fraction of the time it would take a human. Plus, it can identify patterns and relationships that might be missed by human researchers.

However, legal professionals must be aware of the limitations and potential pitfalls of using AI. It is important to verify the accuracy of any information generated by AI tools and to supplement it with conventional research methods. Lawyers must also be cautious about the potential for AI bias and select tools that have been rigorously tested for fairness and accuracy.